Subsections of Simplified FortiGate Autoscale Template

Introduction

Welcome

This documentation provides comprehensive guidance for deploying FortiGate autoscale groups in AWS using the FortiGate Autoscale Simplified Template. This template serves as an accessible wrapper around Fortinet’s enterprise-grade FortiGate Autoscale Templates, dramatically reducing deployment complexity while maintaining full architectural flexibility.

Purpose and Scope

The official FortiGate autoscale templates available in the terraform-aws-cloud-modules repository deliver powerful capabilities for deploying elastic, scalable security architectures in AWS. However, these templates require:

- Deep familiarity with complex Terraform variable structures

- Strict adherence to specific syntax requirements

- Extensive knowledge of AWS networking and FortiGate architectures

- Significant time investment to understand configuration dependencies

The Simplified Template addresses these challenges by:

- Abstracting complexity: Encapsulates intricate configuration patterns into intuitive boolean variables and straightforward parameters

- Accelerating deployment: Reduces configuration time from hours to minutes through common-use-case defaults

- Maintaining flexibility: Retains access to advanced features while providing sensible defaults for standard deployments

- Reducing errors: Minimizes misconfiguration risks through validated input patterns and clear parameter descriptions

What This Template Provides

The Simplified Template enables rapid deployment of FortiGate autoscale groups by simplifying configuration of:

Core Infrastructure

- Network architecture: VPC creation or integration with existing network resources

- Subnet design: Automated subnet allocation across multiple Availability Zones

- Transit Gateway integration: Optional connectivity to existing Transit Gateway hubs

- Load balancing: AWS Gateway Load Balancer (GWLB) configuration and target group management

Autoscale Configuration

- Capacity management: Minimum, maximum, and desired instance counts

- Scaling policies: CPU-based thresholds and CloudWatch alarm configuration

- Instance specifications: FortiGate version, instance type, and AMI selection

- High availability: Multi-AZ distribution and health check parameters

Licensing and Management

- Licensing flexibility: Support for BYOL, PAYG, and FortiFlex licensing models

- License automation: Automated license file distribution or token generation

- Hybrid licensing: Configuration for combining multiple license types

- FortiManager integration: Optional centralized management and policy orchestration

Security and Access

- Management access: Dedicated management interfaces or combined data/management design

- Key pair configuration: SSH access for administrative operations

- Security groups: Automated creation of appropriate ingress/egress rules

- IAM roles: Lambda function permissions for license and lifecycle management

Egress Strategies

- Elastic IP allocation: Per-instance EIP assignment for consistent source NAT

- NAT Gateway integration: Shared NAT Gateway configuration for cost optimization

- Route management: Automated routing table updates for egress traffic flows

Common Use Cases

This template is specifically designed for the most frequently deployed FortiGate autoscale architectures:

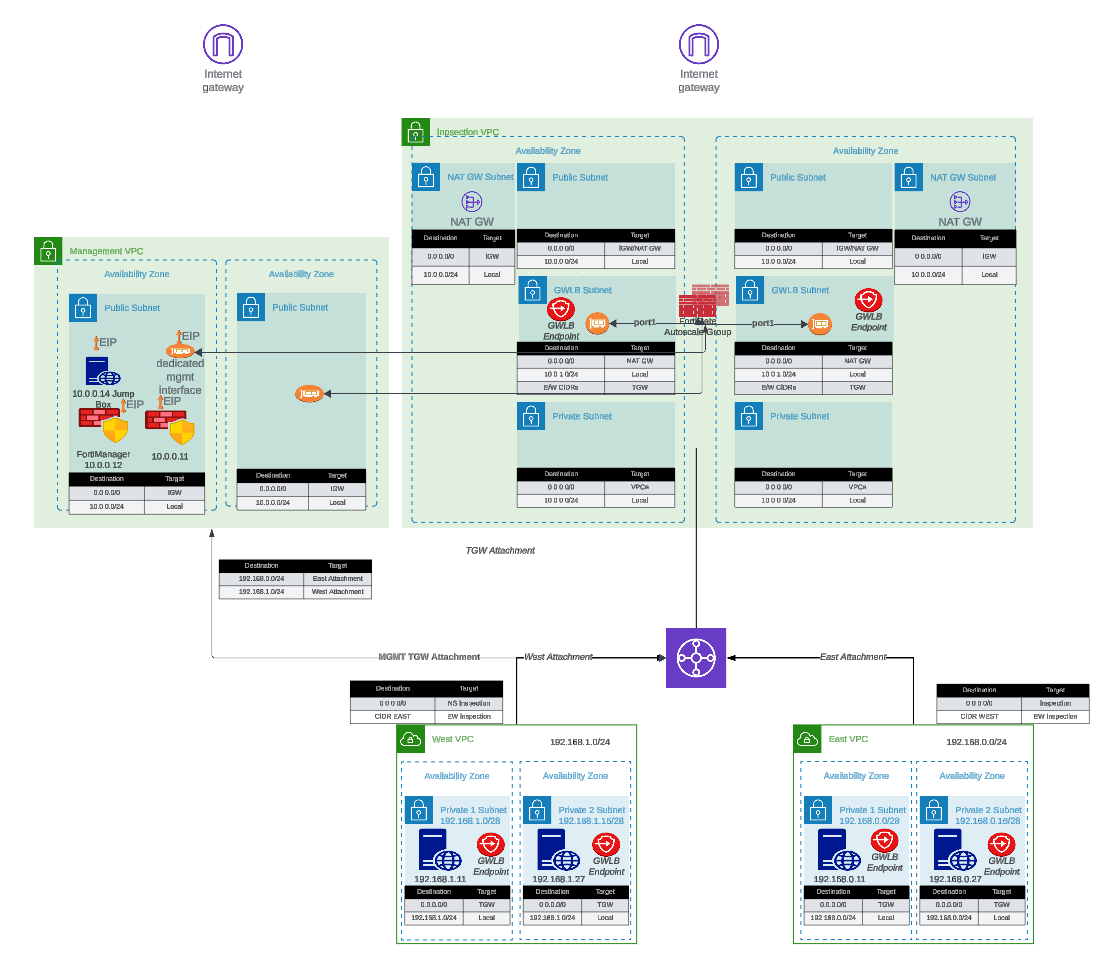

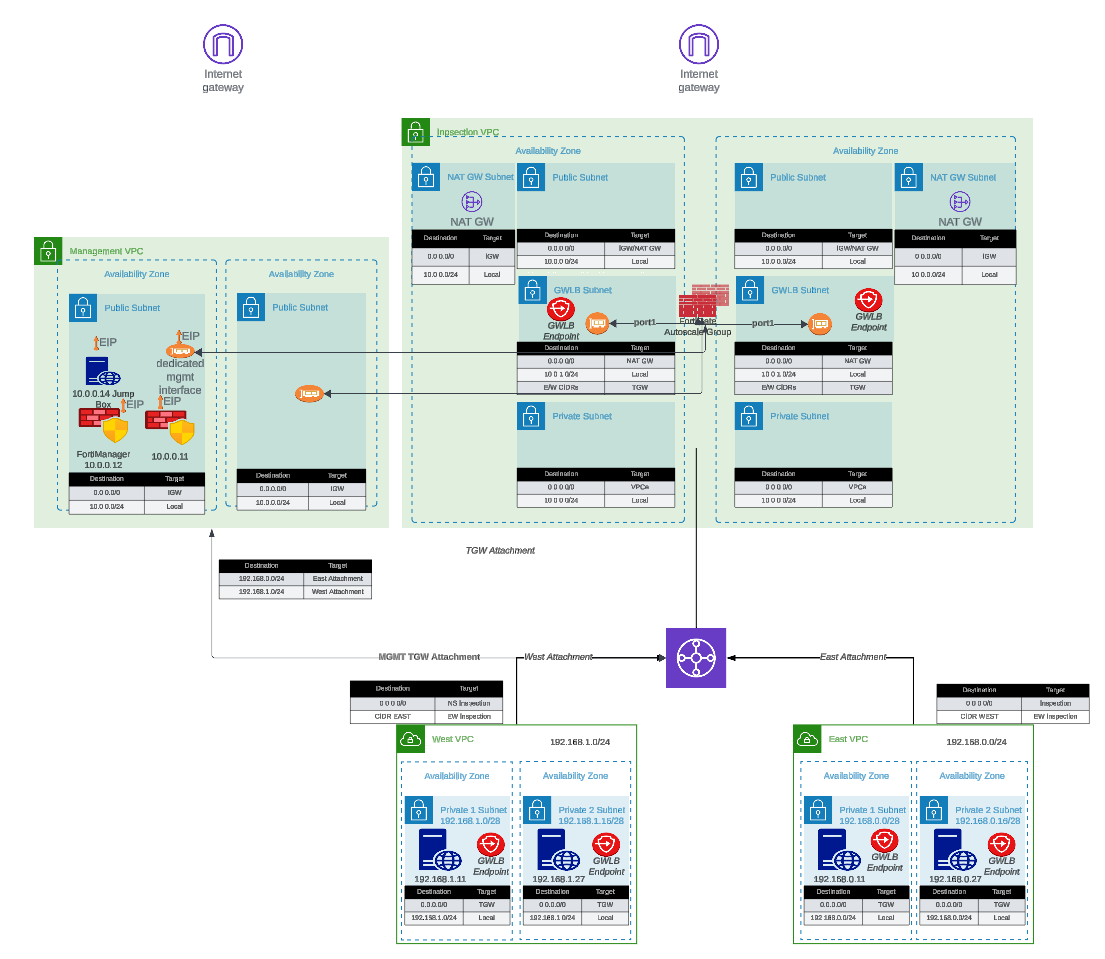

- Centralized Inspection with Transit Gateway: Single inspection VPC serving multiple spoke VPCs through Transit Gateway routing

- Dedicated Management VPC: Isolated management plane for FortiManager/FortiAnalyzer integration with production traffic inspection VPC

- Hybrid Licensing Architectures: Cost-optimized deployments combining BYOL/FortiFlex baseline capacity with PAYG burst capacity

- Existing Infrastructure Integration: Deployment into pre-existing VPCs, subnets, and Transit Gateway environments

How It Works

The Simplified Template approach:

- Variable Abstraction: Translates complex nested map structures into simple boolean flags and direct parameters

- Conditional Logic: Automatically enables or disables features based on use-case selection

- Default Values: Provides production-ready defaults for parameters not requiring customization

- Validation: Implements input validation to catch configuration errors before deployment

- Module Invocation: Dynamically constructs proper syntax for underlying enterprise templates

- Output Standardization: Presents consistent outputs regardless of architecture variation

Prerequisites

Before using this template, ensure you have:

Required Knowledge

- Basic understanding of AWS networking concepts (VPCs, subnets, route tables)

- Familiarity with Terraform workflow (`init`, `plan`, `apply`, `destroy`)

- General understanding of FortiGate firewall concepts

- AWS account with appropriate permissions for VPC, EC2, Lambda, and IAM resource creation

Required Tools

- Terraform: Version 1.0 or later (Download)

- AWS CLI: Configured with appropriate credentials (Installation Guide)

- Git: For cloning the repository

- Text Editor: For editing `terraform.tfvars` configuration files

AWS Resources

- AWS Account: With permissions to create VPCs, subnets, EC2 instances, Lambda functions, and IAM roles

- Service Quotas: Sufficient EC2 instance limits for desired autoscale group size

- S3 Bucket (for BYOL): Storage location for FortiGate license files

- Key Pair: Existing EC2 key pair for SSH access to FortiGate instances

Optional Resources

- FortiManager: For centralized management (if integration is desired)

- FortiAnalyzer: For centralized logging and reporting

- Transit Gateway: If integrating with existing hub-and-spoke architecture

- FortiFlex Account: If using FortiFlex licensing model

Documentation Structure

This guide is organized into the following sections:

- Introduction (this section): Overview, purpose, and prerequisites

- Overview: Architecture patterns, key benefits, and solution capabilities

- Licensing: Detailed comparison of BYOL, PAYG, and FortiFlex licensing options

- Solution Components: In-depth explanation of architectural elements and configuration options

- Templates: Step-by-step deployment procedures and configuration examples

Additional Resources

For comprehensive FortiGate and FortiOS documentation beyond the scope of this deployment guide, please reference:

- FortiGate Documentation Portal: docs.fortinet.com

- FortiGate AWS Deployment Guides: docs.fortinet.com/document/fortigate-public-cloud/

- AWS Marketplace FortiGate Listings: AWS Marketplace

- Fortinet Developer Network (FNDN): fndn.fortinet.net (requires registration)

- FortiGate Administration Guide: docs.fortinet.com/fortigate/admin-guide

- Terraform AWS Cloud Modules Repository: GitHub - fortinetdev/terraform-aws-cloud-modules

Support and Feedback

For technical support:

- Enterprise Template Issues: Report issues on the terraform-aws-cloud-modules GitHub repository

- FortiGate Technical Support: Open support tickets at FortiCare Support Portal

- AWS Infrastructure Issues: Contact AWS Support through your AWS account

For documentation feedback or Simplified Template enhancement requests, please reach out through your Fortinet account team or technical contacts.

Getting Started

Ready to deploy? Proceed to the Overview section to understand the architecture patterns available, or jump directly to the Templates section to begin configuration and deployment.

Is Autoscale Right for You?

Before investing time in this deployment, it is worth asking whether autoscale is the right architecture for your use case. Our general recommendation is to consider intentional scaling — using Terraform to preemptively manage instance count — before committing to an autoscale deployment.

Most customers, once they understand the trade-offs, choose intentional scaling over autoscale. If you can make that case to your customer, you should.

Reasons to Reconsider Autoscale

Scale-Out Latency

A new FortiGate instance takes approximately 4-5 minutes to deploy, license, and become traffic-ready. If the burst event that triggered the scale-out lasts less than 4-5 minutes, the new instance will be ready after the event has already passed. In many cases, autoscale provides no benefit for short-duration bursts.
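The arithmetic behind this point can be sketched as follows. This is a minimal illustration, not part of the template: the function names are hypothetical, and it optimistically assumes the new instance starts launching the moment the burst begins.

```python
def covered_burst_seconds(burst_seconds: float, ready_seconds: float = 300.0) -> float:
    """Seconds of the burst during which the new instance is already traffic-ready.

    ready_seconds defaults to 300 (the upper end of the 4-5 minute estimate).
    """
    return max(0.0, burst_seconds - ready_seconds)


def coverage_fraction(burst_seconds: float, ready_seconds: float = 300.0) -> float:
    """Fraction of the burst that the newly launched instance can help with."""
    if burst_seconds <= 0:
        return 0.0
    return covered_burst_seconds(burst_seconds, ready_seconds) / burst_seconds


# A 4-minute burst ends before the instance is ready; a 10-minute burst is half covered.
print(coverage_fraction(240))
print(coverage_fraction(600))
```

Any delay before the launch is triggered reduces the covered portion further.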

Scale-In Delay

Once an instance is deployed, the scale-in backoff period is intentionally conservative to avoid prematurely removing capacity. This means you may be paying for instances well after they are needed.

FortiGates Rarely Scale Out in Practice

In practice, FortiGate instances are often faster than the infrastructure they are protecting. The CPU thresholds that trigger scale-out are rarely reached, making the autoscale group effectively a fixed-size deployment with additional complexity. Vertical scaling — choosing a larger instance type — is often a simpler and more effective solution.

Non-Deterministic Traffic Flows

GWLB uses 5-tuple hashing to pin a flow to a specific FortiGate instance, but you have no visibility into which instance is handling a given flow without digging through GWLB flow logs and individual FortiGate session tables. Because instances are ephemeral, the instance that handled a flow may be terminated before you can investigate it. This makes troubleshooting traffic and security events significantly more difficult than in a static deployment.
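To illustrate why the handling instance is not obvious from the outside, here is a small sketch of 5-tuple hashing. The hash function, the `Flow` type, and the instance IDs are assumptions chosen for illustration; this is not GWLB's actual algorithm, and the sketch keeps no per-flow state.

```python
import hashlib
from typing import NamedTuple, Sequence


class Flow(NamedTuple):
    src_ip: str
    dst_ip: str
    src_port: int
    dst_port: int
    protocol: int  # IANA protocol number, e.g. 6 = TCP


def pick_target(flow: Flow, targets: Sequence[str]) -> str:
    """Map a flow to one target by hashing its 5-tuple (illustrative hash only)."""
    if not targets:
        raise ValueError("no registered targets")
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:8], "big") % len(targets)
    return targets[index]


flow = Flow("10.0.1.5", "203.0.113.10", 51515, 443, 6)
targets = ["i-aaa", "i-bbb", "i-ccc"]  # hypothetical instance IDs
print(pick_target(flow, targets))
```

The same 5-tuple always lands on the same target, but working out which one requires both the full tuple and the set of targets registered at that moment, which is why investigation falls back to flow logs and session tables.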

License Management Complexity

Licenses are assigned dynamically by a Lambda function backed by a DynamoDB table. If Lambda fails or the table falls out of sync, instances can come up unlicensed. Troubleshooting requires inspecting Lambda execution logs, DynamoDB state, and FortiGate registration status simultaneously — none of which are familiar tools for most FortiGate administrators.

Lambda and CloudWatch Observability

Lambda functions orchestrate the entire autoscale lifecycle: instance launch, license assignment, primary instance election, and scale-in cleanup. When something goes wrong, diagnosis requires navigating CloudWatch log groups, correlating Lambda execution events to specific FortiGate instance activity, and understanding the autoscale state machine. This requires a combined skill set of FortiGate administration, AWS networking, and AWS serverless operations.

Upgrade Complexity

Upgrading FortiOS on a running autoscale group is a manual, multi-step process involving AWS Console operations, new launch template versions, and per-instance firmware upgrades through the FortiGate GUI. There is no automated upgrade path.

VPN Limitations

VPN tunnels cannot be terminated on autoscale instances. IPsec requires a stable, known IP address or FQDN for the remote peer to establish a tunnel against. Autoscale instances are ephemeral: their IPs come and go as the group scales. Even if an EIP is attached, IKE/IPsec session state lives on a specific instance. If that instance is terminated, the tunnel drops and must be fully re-negotiated with a replacement instance. If your design requires site-to-site or client VPN termination on the FortiGate, a static deployment or HA pair is the appropriate solution.

Egress IP Non-Determinism

If you use per-instance Elastic IPs for egress rather than a NAT Gateway, the source IP of outbound traffic is non-deterministic. EIPs are associated with ephemeral instances and change as the group scales. If your downstream systems, partners, or compliance requirements depend on a stable egress IP, you must use a NAT Gateway — which adds cost and a fixed point of failure.

When Autoscale Does Make Sense

If your workload has genuine, sustained traffic bursts that exceed what a single instance can handle, and you cannot predict when those bursts will occur, autoscale can be a good fit. The following guidance applies if you proceed:

Maintain at least one instance per Availability Zone. This avoids cross-AZ traffic inspection costs and ensures local capacity is always available without a cold-start delay.

Tune the CloudWatch alarms. The default scale-out threshold is CPU > 80% for two consecutive 120-second periods. If your bursts are shorter than the scale-out latency, adjust the thresholds or disable scale-out entirely and treat the group as a fixed-size deployment.

Enable `primary_scalein_protection`. This prevents the primary FortiGate instance, which holds the authoritative configuration, from being selected as a scale-in candidate. Without this, configuration can be lost if the primary is terminated and a new primary election is required.

Use a NAT Gateway for egress. Per-instance EIPs result in non-deterministic source IPs as instances come and go. A NAT Gateway provides a stable, predictable egress IP.

Plan licensing carefully. A common pattern is BYOL or FortiFlex for baseline instances (those that never scale in) and PAYG for burst instances. Alternatively, size FortiFlex entitlements to cover the maximum desired capacity. Either way, license capacity must be planned ahead — running out of licenses during a scale-out event leaves new instances unlicensed.

Consider FortiAnalyzer. Given the non-deterministic traffic flow and ephemeral instance nature of autoscale, a central logging and analytics platform is more valuable here than in a static deployment. Without it, correlating security events across instances is very difficult.
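For the alarm-tuning guidance above, it can help to estimate how long a burst must last before added capacity is usable. The sketch below combines the default alarm window (two consecutive 120-second periods) with the 4-5 minute instance readiness time stated earlier. The function names are hypothetical, and the result is a rough lower bound because metric delivery and alarm evaluation add their own delays.

```python
def alarm_delay_seconds(evaluation_periods: int = 2, period_seconds: int = 120) -> int:
    """Minimum time the threshold must stay breached before the alarm fires."""
    return evaluation_periods * period_seconds


def time_to_capacity_seconds(ready_seconds: int,
                             evaluation_periods: int = 2,
                             period_seconds: int = 120) -> int:
    """Alarm window plus the time for a new instance to become traffic-ready."""
    return alarm_delay_seconds(evaluation_periods, period_seconds) + ready_seconds


# 4-5 minutes of readiness time gives roughly 8-9 minutes from burst start to new capacity.
print(time_to_capacity_seconds(240) / 60, "to", time_to_capacity_seconds(300) / 60, "minutes")
```

Under these assumptions, bursts shorter than about eight minutes end before the new instance can take traffic.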

The Alternative: Intentional Scaling

If your load patterns are predictable — scheduled batch jobs, business-hours traffic, known maintenance windows — Terraform can manage instance count intentionally and preemptively. You define the capacity you need, when you need it, and Terraform applies it. This approach is simpler to operate, easier to troubleshoot, and avoids the Lambda/DynamoDB/CloudWatch machinery entirely.

This workshop focuses on autoscale deployments. If intentional scaling is a better fit, the FortiGate AWS Autoscale TEC Workshop covers the lower-level components and gives you more direct control over the deployment.

Overview

Introduction

FortiOS natively supports AWS Autoscaling capabilities, enabling dynamic horizontal scaling of FortiGate clusters within AWS environments. This solution leverages AWS Gateway Load Balancer (GWLB) to intelligently distribute traffic across FortiGate instances in the autoscale group. The cluster dynamically adjusts its capacity based on configurable thresholds—automatically launching new instances when the cluster size falls below the minimum threshold and terminating instances when capacity exceeds the maximum threshold. As instances are added or removed, they are seamlessly registered with or deregistered from associated GWLB target groups, ensuring continuous traffic inspection capabilities while maintaining optimal cluster performance and capacity.

Key Benefits

This autoscaling solution delivers several strategic advantages for AWS security architectures:

Elastic Scalability

- Horizontal scaling: Automatically scales FortiGate cluster capacity in response to traffic patterns and resource utilization

- Cost optimization: Scales down during low-traffic periods to reduce operational costs

- Performance assurance: Scales up during peak demand to maintain consistent security inspection throughput

Flexible Licensing Options

- Hybrid licensing model: Supports combination of BYOL (Bring Your Own License), FortiFlex usage-based licensing for baseline capacity, and AWS Marketplace PAYG (Pay-As-You-Go) for elastic burst capacity

- License optimization: Minimize costs by using BYOL/FortiFlex licenses for steady-state workloads and PAYG for temporary scale-out events

- Simplified license management: Automated license token injection during instance launch via Lambda functions

High Availability and Configuration Management

- Automated configuration synchronization: Primary FortiGate instance automatically synchronizes security policies and configuration to secondary instances using FortiOS native HA sync mechanisms

- FortiManager integration: Optional centralized management through FortiManager for policy orchestration, compliance monitoring, and operational visibility across the autoscale group

- Consistent security posture: Configuration drift prevention ensures all instances enforce identical security policies

Architectural Flexibility

- Centralized inspection architecture: Single inspection VPC model with Transit Gateway integration for hub-and-spoke topology

- Distributed inspection architecture: Multiple inspection points for geo-distributed workloads (coming soon)

- Deployment patterns: Support for single-arm (1-ENI) and dual-arm (2-ENI) FortiGate deployments

Internet Egress Options

- Elastic IP (EIP) NAT: Each FortiGate instance can leverage individual EIPs for source NAT, providing consistent egress IP addresses for allowlist scenarios

- NAT Gateway integration: Alternative architecture using shared NAT Gateways for cost-optimized egress traffic when static source IPs are not required

- Hybrid egress design: Combine EIP and NAT Gateway approaches based on application requirements

Architecture Considerations

This simplified template streamlines the deployment of FortiGate autoscale groups by abstracting infrastructure complexity while providing customization options for:

- VPC and subnet configuration

- Licensing strategy selection

- FortiManager/FortiAnalyzer integration

- Network interface design (dedicated management ENI options)

- Scaling policies and thresholds

- Transit Gateway attachment and routing

Additional Solutions

Fortinet offers several complementary AWS security architectures optimized for different use cases:

- FGCP HA (Single AZ): Active-passive high availability within a single Availability Zone for maximum configuration synchronization and stateful failover

- FGCP HA (Multi-AZ): Active-passive high availability across multiple Availability Zones for enhanced resilience

- Transit Gateway with FortiGate inspection: Centralized security inspection for multi-VPC environments

- Distributed Gateway Load Balancer architectures: Regional traffic inspection patterns

For comprehensive information on Fortinet’s AWS security portfolio, deployment guides, and architectural best practices, visit www.fortinet.com/aws.

Licensing

Overview

FortiGate autoscale deployments in AWS support three distinct licensing models, each optimized for different operational requirements, cost structures, and scaling behaviors. The choice of licensing strategy significantly impacts deployment complexity, operational costs, and the ability to dynamically scale capacity in response to demand.

This template supports all three licensing models and enables hybrid licensing configurations where multiple license types coexist within the same autoscale group, providing maximum flexibility for cost optimization and capacity management.

Licensing Options

AWS Marketplace Pay-As-You-Go (PAYG)

Best for: Proof of concepts, temporary workloads, elastic burst capacity

AWS Marketplace PAYG licensing offers the simplest deployment path with zero upfront licensing requirements. Instances are billed hourly through your AWS account based on instance type and included FortiGuard services.

Advantages

- Zero configuration: No license files, tokens, or registration required

- Instant deployment: Instances launch immediately without license provisioning delays

- Elastic scaling: Ideal for autoscale groups that frequently scale out and in

- No commitment: Pay only for actual runtime hours with no long-term contracts

- Consolidated billing: All costs appear on AWS invoices alongside infrastructure charges

Considerations

- Higher per-hour cost: Premium pricing compared to BYOL or FortiFlex over extended periods

- Service bundle locked: Cannot customize FortiGuard service subscriptions; you receive the bundle included with the marketplace offering

- Limited cost optimization: No volume discounts or prepaid savings

- Vendor lock-in: Cannot migrate licenses to on-premises or other cloud providers

When to Use

- Development, testing, and staging environments

- Proof-of-concept deployments with undefined timelines

- Burst capacity in hybrid licensing architectures (scale beyond BYOL/FortiFlex baseline)

- Short-term projects (< 6 months) where simplicity outweighs cost

- Disaster recovery standby capacity that remains dormant most of the time

Implementation Notes

- Select PAYG AMI from AWS Marketplace during launch template configuration

- No Lambda-based license management required

- Instances automatically activate upon boot

- FortiGuard services update immediately without additional registration

Bring Your Own License (BYOL)

Best for: Long-term production deployments with predictable capacity requirements

BYOL licensing leverages perpetual or term-based FortiGate-VM licenses purchased directly from Fortinet or authorized resellers. This model provides the lowest per-instance operating cost for sustained workloads but requires manual license file management.

Advantages

- Lowest operating cost: Significant savings (40-60%) compared to PAYG for long-term deployments

- Custom service bundles: Select specific FortiGuard subscriptions (UTP, ATP, Enterprise) based on security requirements

- Portable licenses: Migrate licenses between environments (AWS, Azure, on-premises) with proper licensing terms

- Volume discounts: Enterprise agreements provide additional cost reductions at scale

- Predictable budgeting: Fixed annual or multi-year costs independent of instance runtime

Considerations

- Manual license management: Requires obtaining, storing, and deploying license files for each instance

- Upfront capital expense: Purchase licenses before deployment

- Reduced flexibility: Fixed license count limits maximum autoscale capacity unless additional licenses are procured

- License tracking overhead: Must maintain inventory of assigned vs. available licenses

- Decommissioning process: Requires license recovery when scaling in or decommissioning environments

When to Use

- Production workloads with predictable, steady-state capacity requirements

- Long-term deployments (> 1 year) where cost savings justify management overhead

- Organizations with existing Fortinet licensing agreements or ELAs

- Environments requiring specific FortiGuard service combinations not available in marketplace offerings

- Hybrid licensing architectures as the baseline capacity tier

Implementation Notes

- Store license files in S3 bucket accessible by Lambda function

- Lambda function reads license files and applies them during instance boot

- Configure `lic_folder_path` variable to point to license file directory

- Naming convention: License files should match naming pattern expected by Lambda (e.g., sequential numbering)

- DynamoDB table tracks license assignments to prevent duplicate usage

- Decommissioned instances return licenses to available pool for reuse
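The assign-and-return behavior described in these notes can be sketched with an in-memory pool. This is only a conceptual stand-in for the DynamoDB-backed tracking: the `LicensePool` class and its methods are hypothetical and do not reflect the Lambda function's actual code.

```python
class LicensePool:
    """In-memory stand-in for the license-tracking table (hypothetical)."""

    def __init__(self, license_files):
        self._available = sorted(license_files)
        self._assigned = {}  # instance_id -> license file name

    def assign(self, instance_id):
        """Give the instance a license; return None if the pool is exhausted."""
        if instance_id in self._assigned:
            return self._assigned[instance_id]  # repeated calls never double-assign
        if not self._available:
            return None  # the instance would come up unlicensed
        lic = self._available.pop(0)
        self._assigned[instance_id] = lic
        return lic

    def release(self, instance_id):
        """Return the instance's license to the available pool for reuse."""
        lic = self._assigned.pop(instance_id, None)
        if lic is not None:
            self._available.append(lic)
            self._available.sort()
        return lic


pool = LicensePool(["FGVM01-001.lic", "FGVM01-002.lic"])
print(pool.assign("i-1"), pool.assign("i-2"), pool.assign("i-3"))
```

Tracking the assignment per instance is what prevents the same license file from being handed to two instances.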

License File Requirements

    licenses/
    ├── FGVM01-001.lic
    ├── FGVM01-002.lic
    ├── FGVM01-003.lic
    └── FGVM01-004.lic

Critical: Ensure sufficient licenses exist for `asg_max_size`. If licenses are exhausted during scale-out, new instances will remain unlicensed and non-functional.
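A pre-deployment check along these lines can catch a license shortfall early. The sketch is hypothetical: `license_shortfall` is not part of the template, and it works on a plain list of file names rather than reading the S3 bucket. The optional `buffer_pct` parameter is an assumption that lets you add headroom above `asg_max_size`.

```python
def license_shortfall(license_files, asg_max_size, buffer_pct=0):
    """Return how many more .lic files are needed (0 means capacity is sufficient).

    buffer_pct is an integer percentage of extra licenses to require on top
    of asg_max_size, rounded up to a whole license.
    """
    available = sum(1 for name in license_files if name.lower().endswith(".lic"))
    extra = (asg_max_size * buffer_pct + 99) // 100  # integer ceiling
    required = asg_max_size + extra
    return max(0, required - available)


files = ["FGVM01-001.lic", "FGVM01-002.lic", "FGVM01-003.lic", "FGVM01-004.lic"]
print(license_shortfall(files, asg_max_size=4))                 # enough licenses
print(license_shortfall(files, asg_max_size=6))                 # two short
print(license_shortfall(files, asg_max_size=4, buffer_pct=20))  # one short with buffer
```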

FortiFlex (Usage-Based Licensing)

Best for: Dynamic workloads requiring flexibility with optimized costs for medium to long-term deployments

FortiFlex (formerly Flex-VM) is Fortinet’s consumption-based, points-driven licensing program that combines the flexibility of PAYG with cost structures approaching BYOL. Points are consumed daily based on FortiGate configuration (CPU count, service package), and licenses are dynamically provisioned via API tokens.

Advantages

- Flexible scaling: Provision and deprovision licenses on-demand through API integration

- Optimized costs: 20-40% savings compared to PAYG for sustained workloads

- Automated license lifecycle: Lambda function generates license tokens automatically during instance launch

- Right-sizing capability: Change CPU count or service packages dynamically; pay only for what you consume

- Simplified license management: No physical license files; tokens generated via API calls

- Point pooling: Share point allocations across multiple deployments and cloud providers

- Burst capacity support: Quickly provision additional licenses without procurement delays

Considerations

- Initial setup complexity: Requires FortiFlex program registration, configuration templates, and API integration

- Point management: Monitor point consumption to prevent negative balance or service interruption

- Active entitlement management: Must create/stop entitlements to control costs

- API dependency: Relies on connectivity to FortiFlex API endpoints during instance provisioning

- Grace period risks: Running negative balance triggers 90-day grace period; service stops if not resolved

- Minimum commitment: Some FortiFlex programs require minimum annual consumption

When to Use

- Production workloads with variable but predictable traffic patterns

- Multi-environment deployments (dev, staging, production) sharing point pools

- Organizations pursuing cloud-first strategies without legacy perpetual licenses

- Architectures requiring frequent right-sizing of FortiGate instances

- Deployments spanning multiple cloud providers or hybrid architectures

- Cost-conscious autoscale groups with moderate to high uptime requirements

Implementation Notes

- Register FortiFlex program and purchase point packs via FortiCare portal

- Create FortiGate-VM configurations in FortiFlex portal defining CPU count and service packages

- Generate API credentials through IAM portal with FortiFlex permissions

- Configure Lambda function environment variables with FortiFlex API credentials

- Lambda function creates entitlements and retrieves license tokens during instance launch

- Entitlements automatically STOP when instances terminate, halting point consumption

- Monitor point balance via FortiFlex portal or API to prevent service interruption

FortiFlex Prerequisites

FortiFlex Program Registration:

- Purchase program SKU: `FC-10-ELAVR-221-02-XX` (12, 36, or 60 months)

- Register program in FortiCare at https://support.fortinet.com

- Wait up to 4 hours for program validation

Point Pack Purchase:

- Annual packs: `LIC-ELAVM-10K` (10,000 points, 1-year term with rollover)

- Multi-year packs: `LIC-ELAVMMY-50K-XX` (50,000 points, 3-5 year terms)

- Bulk packs: `LIC-ELAVMMY-BULK-SEAT` (100,000 points per seat, minimum 10 seats)

Configuration Creation:

- Define VM specifications (CPU count, service package, VDOMs)

- Example: 2-CPU FortiGate with UTP bundle = ~6.5 points/day

- Use FortiFlex Calculator to estimate consumption: https://fndn.fortinet.net/index.php?/tools/fortiflex/

API Access Setup:

- Create IAM permission profile including FortiFlex portal

- Create API user and download credentials

- Obtain API token via authentication endpoint

- Store credentials securely (AWS Secrets Manager recommended)

Point Consumption Examples

| Configuration | Daily Points | Monthly Points (30 days) | Annual Points (365 days) |
|---|---|---|---|
| 1 CPU, FortiCare Premium | 1.63 | 49 | 595 |
| 2 CPU, UTP Bundle | 6.52 | 196 | 2,380 |
| 4 CPU, ATP Bundle | 26.08 | 782 | 9,519 |
| 8 CPU, Enterprise Bundle | 104.32 | 3,130 | 38,077 |

Note: Actual consumption varies based on specific service selections and VDOM count. Always use the FortiFlex Calculator for accurate estimates.
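The monthly and annual columns follow directly from the daily rate (30 and 365 days, rounded to the nearest point). The sketch below reproduces the table from the daily figures so you can project other instance counts. The function name is hypothetical, and the daily rates are simply the example values above, not authoritative pricing.

```python
DAILY_POINTS = {
    "1 CPU, FortiCare Premium": 1.63,
    "2 CPU, UTP Bundle": 6.52,
    "4 CPU, ATP Bundle": 26.08,
    "8 CPU, Enterprise Bundle": 104.32,
}


def projected_points(daily_points: float, instances: int = 1) -> dict:
    """Project point consumption for a number of identical instances."""
    per_day = daily_points * instances
    return {
        "daily": per_day,
        "monthly": round(per_day * 30),
        "annual": round(per_day * 365),
    }


for name, daily in DAILY_POINTS.items():
    p = projected_points(daily)
    print(f"{name}: {p['monthly']} points/month, {p['annual']} points/year")
```

For example, `projected_points(26.08, instances=4)` projects a four-instance baseline of 4-CPU ATP configurations.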

Hybrid Licensing Architecture

Overview

The autoscale template supports hybrid licensing configurations where multiple license types coexist within separate Auto Scaling Groups (ASGs). This architecture provides cost optimization by using BYOL or FortiFlex for baseline capacity and PAYG for elastic burst capacity.

Architecture Pattern

    ┌─────────────────────────────────────────────────────┐
    │                  GWLB Target Group                  │
    │                      (Unified)                      │
    └────────┬────────────────────────────────┬───────────┘
             │                                │
             ▼                                ▼
    ┌─────────────────┐              ┌─────────────────┐
    │ BYOL/FortiFlex  │              │    PAYG ASG     │
    │      ASG        │              │                 │
    │                 │              │                 │
    │ Min: 2          │              │ Min: 0          │
    │ Max: 4          │              │ Max: 8          │
    │ Desired: 2      │              │ Desired: 0      │
    │                 │              │                 │
    │   (Baseline)    │              │    (Burst)      │
    └─────────────────┘              └─────────────────┘

Configuration Strategy

Primary ASG (BYOL or FortiFlex):

- Configure with minimum = desired capacity

- Sets baseline capacity for steady-state traffic

- Lower per-instance cost for sustained operation

- Example: `min_size = 2`, `max_size = 4`, `desired_capacity = 2`

Secondary ASG (PAYG):

- Configure with minimum = 0, desired = 0

- Remains dormant during normal operations

- Scales out only when primary ASG reaches maximum capacity

- Example: `min_size = 0`, `max_size = 8`, `desired_capacity = 0`

Scaling Coordination:

- Configure CloudWatch alarms with staggered thresholds

- Primary ASG scales at lower CPU threshold (e.g., 60%)

- Secondary ASG scales at higher CPU threshold (e.g., 75%)

- Provides buffer for primary ASG to stabilize before burst scaling
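The staggered thresholds can be reasoned about with a small model. This sketch assumes each ASG has its own independent CPU alarm and scales out whenever its threshold is breached and it still has headroom. The `AsgPolicy` type and the ASG names are hypothetical, and the sizes and thresholds are the example values from this section.

```python
from dataclasses import dataclass


@dataclass
class AsgPolicy:
    name: str
    min_size: int
    max_size: int
    desired_capacity: int
    scale_out_cpu: float  # alarm threshold in percent


def asgs_that_scale_out(avg_cpu: float, policies) -> list:
    """Names of ASGs whose alarm is breached and that can still add instances."""
    return [
        p.name
        for p in policies
        if avg_cpu >= p.scale_out_cpu and p.desired_capacity < p.max_size
    ]


primary = AsgPolicy("byol-or-fortiflex", min_size=2, max_size=4,
                    desired_capacity=2, scale_out_cpu=60.0)
secondary = AsgPolicy("payg", min_size=0, max_size=8,
                      desired_capacity=0, scale_out_cpu=75.0)

print(asgs_that_scale_out(65.0, [primary, secondary]))  # only the primary ASG
print(asgs_that_scale_out(80.0, [primary, secondary]))  # both alarms breached
```

In this model a sharp spike above the higher threshold triggers both groups at once, so the stagger only provides a buffer when CPU rises gradually. Real scaling activity should be monitored to confirm the intended ordering.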

Cost Optimization Example

Scenario: E-commerce application with baseline 4 Gbps throughput, occasional spikes to 12 Gbps

Hybrid Configuration:

Primary: 4x c6i.xlarge (4 vCPUs) with FortiFlex

- Daily points: 4 instances × 26.08 points = 104.32 points/day

- Monthly cost: ~$X (based on point pricing)

- Handles baseline traffic continuously

Secondary: 0-8x c6i.xlarge with PAYG

- Hourly cost: $Y per instance

- Scales only during traffic spikes (estimated 10% of time)

- Monthly cost: 8 instances × $Y/hour × 720 hours × 0.10 = $Z

Savings vs. Pure PAYG: Approximately 35-45% reduction for this traffic pattern
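The two formulas in this example can be written out as below so you can substitute your own rates. The function names are hypothetical, and the hourly rate of 1.0 is only a placeholder for $Y, not a real price. The PAYG figure assumes all eight burst instances run for the whole burst window, which makes it an upper bound.

```python
def fortiflex_daily_points(instances: int, points_per_instance_per_day: float) -> float:
    """Daily FortiFlex point consumption for the baseline ASG."""
    return instances * points_per_instance_per_day


def payg_burst_monthly_cost(instances: int,
                            hourly_rate: float,
                            hours_per_month: int = 720,
                            burst_fraction: float = 0.10) -> float:
    """Monthly PAYG cost if `instances` run for `burst_fraction` of the month."""
    return instances * hourly_rate * hours_per_month * burst_fraction


baseline_points = fortiflex_daily_points(4, 26.08)
burst_cost = payg_burst_monthly_cost(8, hourly_rate=1.0)  # placeholder rate for $Y
print(f"Baseline: {baseline_points:.2f} points/day")
print(f"Burst: {burst_cost:.2f} per month at the placeholder hourly rate")
```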

Implementation Notes

- Both ASGs register with same GWLB target group for unified traffic distribution

- Each ASG requires separate launch template with appropriate licensing configuration

- CloudWatch alarms must reference correct ASG names for scaling actions

- Lambda function handles license provisioning independently for each ASG

- Monitor scaling activities to validate primary ASG exhausts capacity before secondary ASG activates

License Selection Decision Tree

    START: What is your deployment scenario?
    │
    ├─ POC / Testing / Short-term project (< 6 months)
    │   └─ Use: AWS Marketplace PAYG
    │       └─ Rationale: Simplicity, no upfront investment, easy teardown
    │
    ├─ Long-term production (> 12 months) with steady-state capacity
    │   └─ Do you have existing Fortinet licenses or ELA?
    │       ├─ YES → Use: BYOL
    │       │   └─ Rationale: Lowest cost, leverage existing investment
    │       └─ NO → Use: FortiFlex
    │           └─ Rationale: Flexible, better cost than PAYG, no upfront licensing
    │
    ├─ Production with variable traffic patterns
    │   └─ Use: Hybrid (FortiFlex + PAYG)
    │       └─ Rationale: Baseline cost optimization with elastic burst capacity
    │
    └─ Multi-environment deployment (dev/staging/prod)
        └─ Use: FortiFlex
            └─ Rationale: Point pooling across environments, on-demand provisioning

Best Practices

General Recommendations

Calculate total cost of ownership (TCO):

- Project instance runtime hours over 12-36 months

- Factor in scaling frequency and burst capacity requirements

- Include license management overhead costs for BYOL

- Use FortiFlex Calculator for accurate point consumption estimates

Start with PAYG for prototyping:

- Validate architecture and sizing before committing to licenses

- Measure actual traffic patterns to inform license type selection

- Convert to BYOL or FortiFlex after requirements stabilize

Implement hybrid licensing for cost optimization:

- Use BYOL/FortiFlex for baseline capacity that runs 24/7

- Use PAYG for burst capacity that scales intermittently

- Monitor scaling patterns monthly and adjust ASG configurations

Automate license lifecycle management:

- Use Lambda functions for automated license provisioning

- Implement DynamoDB tracking for BYOL license assignments

- Enable CloudWatch alarms for FortiFlex point balance monitoring

- Store FortiFlex API credentials in AWS Secrets Manager

BYOL-Specific Best Practices

Maintain license inventory:

- Track assigned vs. available licenses in spreadsheet or CMDB

- Reserve 10-20% buffer above asg_max_size for maintenance windows

- Implement automated alerts when available licenses fall below threshold

Standardize license file naming:

- Use consistent naming convention (e.g., FGVMXX-001.lic)

- Document naming pattern in deployment runbooks

- Ensure Lambda function matches naming pattern logic

Test license recovery:

- Verify decommissioned instances return licenses to pool

- Validate DynamoDB table updates correctly

- Practice license recovery procedures before production incidents

FortiFlex-Specific Best Practices

Monitor point consumption actively:

- Review Point Usage reports weekly in FortiFlex portal

- Set up email notifications for low balance (90/60/30 day thresholds)

- Correlate point consumption with CloudWatch ASG metrics

Plan point pack purchases:

- Purchase points early in program year to maximize rollover (annual packs)

- Use multi-year packs for long-term stable deployments to avoid rollover complexity

- Maintain 20-30% buffer above projected consumption
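The buffer guidance above is simple arithmetic, which the following sketch illustrates. The daily point rate per instance is a placeholder assumption, not a published figure; take the real rate for your configuration from the FortiFlex Points Calculator. The function name and parameters are hypothetical.

```python
def points_to_purchase(daily_points_per_instance: int,
                       average_instances: int,
                       days: int,
                       buffer_percent: int = 25) -> int:
    """Estimate points to buy: projected consumption plus a safety buffer.

    daily_points_per_instance is a placeholder input; look up the real
    rate for your configuration before relying on the result.
    """
    if not 0 <= buffer_percent <= 100:
        raise ValueError("buffer_percent must be between 0 and 100")
    projected = daily_points_per_instance * average_instances * days
    # Integer ceiling division keeps the result exact (no float rounding).
    buffer_points = -(-projected * buffer_percent // 100)
    return projected + buffer_points


if __name__ == "__main__":
    # Hypothetical inputs: 10 points/day per instance, 3 instances on average.
    print(points_to_purchase(10, 3, 365, buffer_percent=25))
```

Re-running the estimate with measured instance-hours from CloudWatch, rather than a planning guess, keeps the buffer tied to actual scaling behavior.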

Optimize entitlement lifecycle:

- STOP entitlements immediately after instance termination to halt point consumption

- Use Lambda automation to stop entitlements within minutes of scale-in events

- Review STOPPED entitlements weekly and delete if no longer needed

Right-size FortiGate configurations:

- Start with minimal CPU count and scale up as needed

- Use A La Carte service packages for cost optimization when not all services required

- Adjust configurations quarterly based on actual usage patterns

Troubleshooting

Common Licensing Issues

BYOL: Instances Launch Without License

Symptoms: FortiGate instance boots but no license is applied; limited functionality

Causes:

- License file not found in S3 bucket

- Incorrect lic_folder_path variable

- Lambda function lacks S3 permissions

- License file naming doesn’t match Lambda logic

- All licenses already assigned (pool exhausted)

Resolution:

- Verify license files exist in S3 bucket: aws s3 ls s3://<bucket>/licenses/

- Check Lambda CloudWatch logs for S3 access errors

- Validate IAM role attached to Lambda has s3:GetObject permission

- Confirm available licenses exist in DynamoDB tracking table

- Manually apply license via FortiGate CLI: execute restore vmlicense tftp <license.lic> <tftp_server>

FortiFlex: License Token Generation Fails

Symptoms: Instance launches but does not activate; no serial number assigned

Causes:

- FortiFlex API credentials expired or invalid

- Insufficient points in FortiFlex account

- FortiFlex program expired

- Network connectivity issues to FortiFlex API

- Configuration ID not found or deactivated

Resolution:

- Check Lambda CloudWatch logs for API authentication errors

- Verify FortiFlex API credentials by testing the authentication endpoint with curl

- Log into FortiFlex portal and check point balance

- Confirm program status and expiration date

- Verify configuration exists and is active in FortiFlex portal

- Test network connectivity from Lambda to https://support.fortinet.com

Hybrid Licensing: Secondary ASG Scales Before Primary Exhausted

Symptoms: PAYG instances launch while primary ASG has available capacity

Causes:

- CloudWatch alarm thresholds misconfigured

- Alarm evaluation periods too short

- ASG cooldown periods insufficient

- Stale CloudWatch metrics

Resolution:

- Review CloudWatch alarm configurations for both ASGs

- Increase primary ASG alarm threshold (e.g., 60% → 70%)

- Raise secondary ASG alarm threshold (e.g., 75% → 80%)

- Extend alarm evaluation periods to 3-5 minutes

- Implement alarm dependencies (secondary alarm checks primary ASG size)
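The "alarm dependency" idea in the last item can be sketched as a simple predicate: the secondary (PAYG) group is allowed to scale out only when the primary group is already at its maximum size and load is still above the secondary threshold. This is an illustration of the gating logic only, not how the template implements it; the function name, parameters, and the 80% default are assumptions.

```python
def secondary_may_scale_out(primary_in_service: int,
                            primary_max_size: int,
                            cpu_percent: float,
                            secondary_threshold: float = 80.0) -> bool:
    """Return True only when the primary ASG is full AND load is still high."""
    primary_exhausted = primary_in_service >= primary_max_size
    return primary_exhausted and cpu_percent >= secondary_threshold


if __name__ == "__main__":
    # Primary ASG has 3 of 4 instances running: PAYG must not scale yet.
    print(secondary_may_scale_out(3, 4, cpu_percent=95.0))
    # Primary ASG is full and CPU is above the secondary threshold.
    print(secondary_may_scale_out(4, 4, cpu_percent=85.0))
```

Checking the primary group's size explicitly avoids relying on threshold spacing alone, which is what fails when metrics are stale or evaluation periods are short.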

License Not Applied After Instance Boot

Symptoms: Instance operational but running in limited mode or showing expired license

Causes:

- User-data script failed during execution

- License injection command syntax error

- Network connectivity issues during boot

- FortiGate version mismatch with license

Resolution:

- SSH to FortiGate instance and check status: get system status

- Review user-data execution logs: /var/log/cloud-init-output.log

- Manually inject license:

  - BYOL: execute restore vmlicense tftp <license.lic> <tftp_server>

  - FortiFlex: execute vm-license <TOKEN>

- Verify network connectivity: execute ping fortiguard.com

- Check FortiOS version compatibility with license type

Additional Resources

Official Documentation

- FortiFlex Administration Guide: docs.fortinet.com (search “FortiFlex”)

- FortiGate-VM Licensing Guide: docs.fortinet.com/document/fortigate-vm/

- AWS Marketplace FortiGate Listings: AWS Marketplace

Tools & Calculators

- FortiFlex Points Calculator: fndn.fortinet.net/index.php?/tools/fortiflex/

- AWS Pricing Calculator: calculator.aws (for PAYG cost estimation)

Support Channels

- FortiCare Portal: support.fortinet.com

- FortiFlex Portal: FortiCare > Services > Assets & Accounts > FortiFlex

- Technical Support: Open support ticket for licensing issues

- Sales Team: Contact for enterprise licensing agreements or volume discounts

Summary

Choosing the appropriate licensing model for your FortiGate autoscale deployment requires careful evaluation of deployment duration, traffic patterns, operational complexity tolerance, and budget constraints. This template supports all licensing models and hybrid configurations, enabling you to optimize costs while maintaining the flexibility to adapt to changing requirements.

Quick Selection Guide:

- PAYG: Simplicity matters more than cost; short-term or highly variable workloads

- BYOL: Lowest cost for long-term, predictable capacity; you have existing licenses

- FortiFlex: Balance of flexibility and cost; dynamic workloads without upfront licenses

- Hybrid: Best cost optimization; combine baseline BYOL/FortiFlex with PAYG burst capacity
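The quick selection guide can be condensed into a small function. This is a deliberately simplified sketch: the three boolean inputs and the function name are assumptions made for illustration, and real decisions should also weigh the cost and operational factors discussed earlier.

```python
def recommend_license(long_term: bool,
                      variable_traffic: bool,
                      has_existing_licenses: bool) -> str:
    """Map three coarse deployment traits to a licensing model."""
    if not long_term:
        # Short-term or POC work: simplicity matters more than cost.
        return "PAYG"
    if variable_traffic:
        # Licensed baseline plus PAYG burst capacity.
        return "Hybrid"
    if has_existing_licenses:
        # Steady-state capacity with licenses already owned.
        return "BYOL"
    # Long-term, steady, but no upfront licenses available.
    return "FortiFlex"


if __name__ == "__main__":
    print(recommend_license(long_term=True,
                            variable_traffic=False,
                            has_existing_licenses=True))
```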

Solution Components

The FortiGate Autoscale Simplified Template abstracts complex architectural patterns into configurable components that can be enabled or customized through the terraform.tfvars file.

This section provides detailed explanations of each component, configuration options, and architectural considerations to help you design the optimal deployment for your requirements.

What You’ll Learn

This section covers the major architectural elements available in the template:

- Internet Egress Options: Choose between EIP or NAT Gateway architectures

- Firewall Architecture: Understand 1-ARM vs 2-ARM configurations

- Management Isolation: Configure dedicated management ENI and VPC options

- Licensing: Manage BYOL licenses and integrate FortiFlex API

- FortiManager Integration: Enable centralized management and policy orchestration

- Capacity Planning: Configure autoscale group sizing and scaling strategies

- Primary Protection: Implement scale-in protection for configuration stability

- Additional Options: Fine-tune instance specifications and advanced settings

Each component page includes:

- Configuration examples

- Architecture diagrams

- Best practices

- Troubleshooting guidance

- Use case recommendations

Select a component from the navigation menu to learn more about specific configuration options.

Subsections of Solution Components

Internet Egress Options

Overview

The FortiGate autoscale solution provides two distinct architectures for internet egress traffic, each optimized for different operational requirements and cost considerations.

Option 1: Elastic IP (EIP) per Instance

Each FortiGate instance in the autoscale group receives a dedicated Elastic IP address. All traffic destined for the public internet is source-NATed behind the instance’s assigned EIP.

Configuration

access_internet_mode = "eip"

Architecture Behavior

In EIP mode, the architecture routes all internet-bound traffic to port2 (the public interface). The route table for the public subnet directs traffic to the Internet Gateway (IGW), where automatic source NAT to the associated EIP occurs.

Advantages

- No NAT Gateway costs: Eliminates monthly NAT Gateway charges ($0.045/hour + data processing)

- Distributed egress: Each instance has independent internet connectivity

- Simplified troubleshooting: Per-instance source IP simplifies traffic flow analysis

- No single point of failure: Loss of one instance’s EIP doesn’t affect others

Considerations

- Unpredictable IP addresses: EIPs are allocated from AWS’s pool; you cannot predict or specify the assigned addresses

- Allowlist complexity: Destinations requiring IP allowlisting must accommodate a pool of EIPs (one per maximum autoscale capacity)

- IP churn during scaling: Scale-out events introduce new source IPs; scale-in events remove them

- Limited EIP quota: AWS accounts have default limits (5 EIPs per region, increased upon request)

Best Use Cases

- Cost-sensitive deployments where NAT Gateway charges exceed EIP allocation costs

- Environments where destination allowlisting is not required

- Architectures prioritizing distributed egress over consistent source IPs

- Development and testing environments with limited budget

Option 2: NAT Gateway

All FortiGate instances share one or more NAT Gateways deployed in public subnets. Traffic is source-NATed to the NAT Gateway’s static Elastic IP address.

Configuration

access_internet_mode = "nat_gw"

Architecture Behavior

NAT Gateway mode requires additional subnet and route table configuration. Internet-bound traffic is first routed to the NAT Gateway in the public subnet, which performs source NAT to its static EIP before forwarding to the IGW.

Advantages

- Predictable source IP: Single, stable public IP address for the lifetime of the NAT Gateway

- Simplified allowlisting: Destinations only need to allowlist one IP address (per Availability Zone)

- High throughput: NAT Gateway supports up to 45 Gbps per AZ

- Managed service: AWS handles NAT Gateway scaling and availability

- Independent of FortiGate scaling: Source IP remains constant during scale-in/scale-out events

Considerations

- Additional costs: $0.045/hour per NAT Gateway + $0.045 per GB data processed

- Per-AZ deployment: Multi-AZ architectures require NAT Gateway in each AZ for fault tolerance

- Additional subnet requirements: Requires dedicated NAT Gateway subnet in each AZ

- Route table complexity: Additional route tables needed for NAT Gateway routing

Cost Analysis Example

Scenario: 4 FortiGate instances processing 10 TB/month egress traffic

EIP Mode:

- 4 EIP allocations: 4 × $0.005/hour × 730 hours = $14.60 (AWS bills every public IPv4 address, including EIPs, at $0.005/hour since February 2024)

- Total monthly: ~$14.60 (minimal costs)

NAT Gateway Mode (2 AZs):

- 2 NAT Gateways: 2 × $0.045/hour × 730 hours = $65.70

- Data processing: 10,000 GB × $0.045 = $450.00

- Total monthly: $515.70

Decision Point: NAT Gateway makes sense when consistent source IP requirement justifies the additional cost.
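To rerun the NAT Gateway arithmetic for a different number of Availability Zones or traffic volume, a small helper such as the following can be used. The default rates are the ones quoted in this section; actual AWS pricing varies by region and over time, so treat them as assumptions and check the current price list. The function name is hypothetical.

```python
HOURS_PER_MONTH = 730  # same month length used in the example above


def nat_gateway_monthly_cost(gateways: int,
                             egress_gb: float,
                             hourly_rate: float = 0.045,
                             per_gb_rate: float = 0.045) -> dict:
    """Return the fixed, data-processing, and total monthly NAT Gateway cost."""
    fixed = gateways * hourly_rate * HOURS_PER_MONTH
    data = egress_gb * per_gb_rate
    return {
        "fixed": round(fixed, 2),
        "data_processing": round(data, 2),
        "total": round(fixed + data, 2),
    }


if __name__ == "__main__":
    # Scenario from the text: 2 AZs (one NAT Gateway each), 10 TB/month egress.
    print(nat_gateway_monthly_cost(gateways=2, egress_gb=10_000))
```

Because data processing dominates the total at this volume, the egress estimate matters far more than the number of gateways.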

Best Use Cases

- Production environments requiring predictable source IPs

- Compliance scenarios where destination IP allowlisting is mandatory

- High-volume egress traffic to SaaS providers with IP allowlisting requirements

- Architectures where operational simplicity outweighs additional cost

Decision Matrix

| Factor | EIP Mode | NAT Gateway Mode |

|---|---|---|

| Monthly Cost | Minimal | $500+ (varies with traffic) |

| Source IP Predictability | Variable (changes with scaling) | Stable |

| Allowlisting Complexity | High (multiple IPs) | Low (single IP per AZ) |

| Throughput | Per-instance limit | Up to 45 Gbps per AZ |

| Operational Complexity | Low | Medium |

| Best For | Dev/test, cost-sensitive | Production, compliance-driven |

Next Steps

After selecting your internet egress option, proceed to Firewall Architecture to configure the FortiGate interface model.

Firewall Architecture

Overview

FortiGate instances can operate in single-arm (1-ARM) or dual-arm (2-ARM) network configurations, fundamentally changing traffic flow patterns through the firewall.

Configuration

firewall_policy_mode = "1-arm" # or "2-arm"

2-ARM Configuration (Recommended for Most Deployments)

Architecture Overview

The 2-ARM configuration deploys FortiGate instances with distinct “trusted” (private) and “untrusted” (public) interfaces, providing clear network segmentation.

Traffic Flow:

- Traffic arrives at GWLB Endpoints (GWLBe) in the inspection VPC

- GWLB load-balances traffic across healthy FortiGate instances

- Traffic encapsulated in Geneve tunnels arrives at FortiGate port1 (data plane)

- FortiGate inspects traffic and applies security policies

- Internet-bound traffic exits via port2 (public interface)

- Port2 traffic is source-NATed via EIP or NAT Gateway

- Return traffic follows reverse path back through Geneve tunnels

Interface Assignments

- port1: Data plane interface for GWLB connectivity (Geneve tunnel termination)

- port2: Public interface for internet egress (with optional dedicated management when enabled)

Network Interfaces Visualization

The FortiGate GUI displays both physical interfaces and logical Geneve tunnel interfaces. Traffic inspection occurs on the logical tunnel interfaces, while physical port2 handles egress.

Advantages

- Clear network segmentation: Separate trusted and untrusted zones

- Traditional firewall model: Familiar architecture for network security teams

- Simplified policy creation: North-South policies align with interface direction

- Better traffic visibility: Distinct ingress/egress paths ease troubleshooting

- Dedicated management option: Port2 can be isolated for management traffic

Best Use Cases

- Production deployments requiring clear network segmentation

- Environments with security policies mandating separate trusted/untrusted zones

- Architectures where dedicated management interface is required

- Standard north-south inspection use cases

1-ARM Configuration

Architecture Overview

The 1-ARM configuration uses a single interface (port1) for all data plane traffic, eliminating the need for a second network interface.

Traffic Flow:

- Traffic arrives at port1 encapsulated in Geneve tunnels from GWLB

- FortiGate inspects traffic and applies security policies

- Traffic is hairpinned back through the same Geneve tunnel it arrived on

- Traffic returns to originating distributed VPC through GWLB

- Distributed VPC uses its own internet egress path (IGW/NAT Gateway)

This “bump-in-the-wire” architecture is the typical 1-ARM pattern for distributed inspection, where the FortiGate provides security inspection but traffic egresses from the spoke VPC, not the inspection VPC.

Important Behavior: Stateful Load Balancing

GWLB Statefulness: The Gateway Load Balancer maintains connection state tables for traffic flows.

Primary Traffic Pattern (Distributed Architecture):

- ✅ Traffic enters via Geneve tunnel → FortiGate inspection → Hairpins back through same Geneve tunnel

- ✅ Distributed VPC handles actual internet egress via its own IGW/NAT Gateway

- ✅ This “bump-in-the-wire” model provides security inspection without routing traffic through inspection VPC

Key Requirement: Symmetric routing through the GWLB. Traffic must return via the same Geneve tunnel it arrived on to maintain proper state table entries.

Info

Centralized Egress Architecture (Transit Gateway Pattern)

In centralized egress deployments with Transit Gateway, the traffic flow is fundamentally different and represents the primary use case for internet egress through the inspection VPC:

Traffic Flow:

- Spoke VPC traffic routes to Transit Gateway

- TGW routes traffic to inspection VPC

- Traffic enters GWLBe (same AZ to avoid cross-AZ charges)

- GWLB forwards traffic through Geneve tunnel to FortiGate

- FortiGate inspects traffic and applies security policies

- Traffic exits port1 (1-ARM) or port2 (2-ARM) toward internet

- Egress via EIP or NAT Gateway in inspection VPC

- Response traffic returns via same interface to same Geneve tunnel

This is the standard architecture for centralized internet egress where:

- All spoke VPCs route internet-bound traffic through the inspection VPC

- FortiGate autoscale group provides centralized security inspection AND NAT

- Single egress point simplifies security policy management and reduces costs

- Requires careful route table configuration to maintain symmetric routing

When to use: Centralized egress architectures where spoke VPCs do NOT have their own internet gateways.

Note

Distributed Architecture - Alternative Pattern (Advanced Use Case)

In distributed architectures where spoke VPCs have their own internet egress, it is possible (but not typical) to configure traffic to exit through the inspection VPC instead of hairpinning:

- Traffic enters via Geneve tunnel → Exits port1 to internet → Response returns via port1 to same Geneve tunnel

This pattern requires:

- Careful route table configuration in the inspection VPC

- Specific firewall policies on the FortiGate

- Proper symmetric routing to maintain GWLB state tables

This is rarely used in distributed architectures since spoke VPCs typically handle their own egress. The standard bump-in-the-wire pattern (hairpin through same Geneve tunnel) is recommended when spoke VPCs have internet gateways.

Interface Assignments

- port1: Combined data plane (Geneve) and egress (internet) interface

Advantages

- Reduced complexity: Single interface simplifies routing and subnet allocation

- Lower costs: Fewer ENIs to manage and potential for smaller instance types

- Simplified subnet design: Only requires one data subnet per AZ

Considerations

- Hairpinning pattern: Traffic typically hairpins back through same Geneve tunnel

- Higher port1 bandwidth requirements: All traffic flows through single interface (both directions)

- Limited management options: Cannot enable dedicated management ENI in true 1-ARM mode

- Symmetric routing requirement: All traffic must egress and return via port1 for proper state table maintenance

Best Use Cases

- Cost-optimized deployments with lower throughput requirements

- Simple north-south inspection without management VPC integration

- Development and testing environments

- Architectures where simplified subnet design is prioritized

Comparison Matrix

| Factor | 1-ARM | 2-ARM |

|---|---|---|

| Interfaces Required | 1 (port1) | 2 (port1 + port2) |

| Network Complexity | Lower | Higher |

| Cost | Lower | Slightly higher |

| Management Isolation | Not available | Available |

| Traffic Pattern | Hairpin (distributed) or egress (centralized) | Clear ingress/egress separation |

| Best For | Simple deployments, cost optimization | Production, clear segmentation |

Next Steps

After selecting your firewall architecture, proceed to Dedicated Management ENI to learn about management plane isolation options.

Management Isolation Options

Overview

The FortiGate autoscale solution provides multiple approaches to isolating management traffic from data plane traffic, ranging from shared interfaces to complete physical network separation.

This page covers three progressive levels of management isolation, allowing you to choose the appropriate security posture for your deployment requirements.

Option 1: Combined Data + Management (Default)

Architecture Overview

In the default configuration, port2 serves dual purposes:

- Data plane: Internet egress for inspected traffic (in 2-ARM mode)

- Management plane: GUI, SSH, SNMP access

Configuration

enable_dedicated_management_eni = false

enable_dedicated_management_vpc = false

Characteristics

- Simplest configuration: No additional interfaces or VPCs required

- Lower cost: Minimal infrastructure overhead

- Shared security groups: Same rules govern data and management traffic

- Single failure domain: Management access tied to data plane availability

When to Use

- Development and testing environments

- Proof-of-concept deployments

- Budget-constrained projects

- Simple architectures without compliance requirements

Option 2: Dedicated Management ENI

Architecture Overview

Port2 is removed from the data plane and dedicated exclusively to management functions. FortiOS configures the interface with set dedicated-to management, placing it in an isolated VRF with independent routing.

Configuration

enable_dedicated_management_eni = true

How It Works

- Dedicated-to attribute: FortiOS configures port2 with set dedicated-to management

- Separate VRF: Port2 is placed in an isolated VRF with independent routing table

- Policy restrictions: FortiGate prevents creation of firewall policies using port2

- Management-only traffic: GUI, SSH, SNMP, and FortiManager/FortiAnalyzer connectivity

FortiOS Configuration Impact

The dedicated management ENI can be verified in the FortiGate GUI: the interface shows the dedicated-to: management attribute and a separate VRF assignment, preventing data plane traffic from using this interface.

Important Compatibility Notes

Warning

Critical Limitation: 2-ARM + NAT Gateway + Dedicated Management ENI

When combining:

- firewall_policy_mode = "2-arm"

- access_internet_mode = "nat_gw"

- enable_dedicated_management_eni = true

Port2 will NOT receive an Elastic IP address. This is a valid configuration, but imposes connectivity restrictions:

- ❌ Cannot access FortiGate management from public internet

- ✅ Can access via private IP through AWS Direct Connect or VPN

- ✅ Can access via management VPC (see Option 3 below)

If you require public internet access to the FortiGate management interface with NAT Gateway egress, either:

- Use access_internet_mode = "eip" (assigns EIP to port2)

- Use dedicated management VPC with separate internet connectivity (Option 3)

- Implement AWS Systems Manager Session Manager for private connectivity
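A pre-deployment check for this combination can be sketched as follows. The function is a hypothetical helper that mirrors the rule stated in the warning above; it is not part of the template, and it only inspects values you pass in rather than parsing terraform.tfvars.

```python
from typing import List


def management_access_warnings(firewall_policy_mode: str,
                               access_internet_mode: str,
                               enable_dedicated_management_eni: bool,
                               enable_dedicated_management_vpc: bool = False) -> List[str]:
    """Flag the 2-ARM + NAT Gateway + dedicated management ENI combination."""
    warnings: List[str] = []
    no_public_ip_on_port2 = (
        firewall_policy_mode == "2-arm"
        and access_internet_mode == "nat_gw"
        and enable_dedicated_management_eni
        # A dedicated management VPC supplies its own access path.
        and not enable_dedicated_management_vpc
    )
    if no_public_ip_on_port2:
        warnings.append(
            "port2 will not receive an Elastic IP; plan management access "
            "via VPN, Direct Connect, or a dedicated management VPC."
        )
    return warnings


if __name__ == "__main__":
    for message in management_access_warnings("2-arm", "nat_gw", True):
        print("WARNING:", message)
```

An empty list means the combination does not trigger this particular restriction; it does not validate the settings in any other way.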

Characteristics

- Clear separation of concerns: Management traffic isolated from data plane

- Independent security policies: Separate security groups for management interface

- Enhanced security posture: Reduces attack surface on management plane

- Moderate complexity: Requires additional subnet and routing configuration

When to Use

- Production deployments requiring management isolation

- Security-conscious environments

- Architectures without dedicated management VPC

- Compliance requirements for management plane separation

Option 3: Dedicated Management VPC (Full Isolation)

Architecture Overview

The dedicated management VPC provides complete physical network separation by deploying FortiGate management interfaces in an entirely separate VPC from the data plane.

Configuration

enable_dedicated_management_vpc = true

dedicated_management_vpc_tag = "your-mgmt-vpc-tag"

dedicated_management_public_az1_subnet_tag = "your-az1-subnet-tag"

dedicated_management_public_az2_subnet_tag = "your-az2-subnet-tag"

Benefits

- Physical network separation: Management traffic never traverses inspection VPC

- Independent internet connectivity: Management VPC has dedicated IGW or VPN

- Centralized management infrastructure: FortiManager and FortiAnalyzer deployed in management VPC

- Separate security controls: Management VPC security groups independent of data plane

- Isolated failure domains: Management VPC issues don’t affect data plane

Management VPC Creation Options

Option A: Created by existing_vpc_resources Template (Recommended)

The existing_vpc_resources template creates the management VPC with standardized tags that the simplified template automatically discovers.

Advantages:

- Management VPC lifecycle independent of inspection VPC

- FortiManager/FortiAnalyzer persistence across inspection VPC redeployments

- Separation of concerns for infrastructure management

Default Tags (automatically created):

Configuration (terraform.tfvars):

enable_dedicated_management_vpc = true

dedicated_management_vpc_tag = "acme-test-management-vpc"

dedicated_management_public_az1_subnet_tag = "acme-test-management-public-az1-subnet"

dedicated_management_public_az2_subnet_tag = "acme-test-management-public-az2-subnet"

Option B: Use Existing Management VPC

If you have an existing management VPC with custom tags, configure the template to discover it:

Configuration:

enable_dedicated_management_vpc = true

dedicated_management_vpc_tag = "my-custom-mgmt-vpc-tag"

dedicated_management_public_az1_subnet_tag = "my-custom-mgmt-public-az1-tag"

dedicated_management_public_az2_subnet_tag = "my-custom-mgmt-public-az2-tag"

The template uses these tags to locate the management VPC and subnets via Terraform data sources.

Behavior When Enabled

When enable_dedicated_management_vpc = true:

- Automatic ENI creation: Template creates dedicated management ENI (port2) in management VPC subnets

- Implies dedicated management ENI: Automatically sets enable_dedicated_management_eni = true

- VPC peering/TGW: Management VPC must have connectivity to inspection VPC for HA sync

- Security group creation: Appropriate security groups created for management traffic

Network Connectivity Requirements

Management VPC → Inspection VPC Connectivity:

- Required for FortiGate HA synchronization between instances

- Typically implemented via VPC peering or Transit Gateway attachment

- Must allow TCP port 443 (HA sync), TCP 22 (SSH), ICMP (health checks)

Management VPC → Internet Connectivity:

- Required for FortiGuard services (signature updates, licensing)

- Required for administrator access to FortiGate management interfaces

- Can be via Internet Gateway, NAT Gateway, or AWS Direct Connect

Characteristics

- Highest security posture: Complete physical isolation

- Greatest flexibility: Independent infrastructure lifecycle

- Higher complexity: Requires VPC peering or TGW configuration

- Additional cost: Separate VPC infrastructure and data transfer charges

When to Use

- Enterprise production deployments

- Strict compliance requirements (PCI-DSS, HIPAA, etc.)

- Multi-account AWS architectures

- Environments with dedicated management infrastructure

- Organizations with existing management VPCs for network security appliances

Comparison Matrix

| Factor | Combined (Default) | Dedicated ENI | Dedicated VPC |

|---|---|---|---|

| Security Isolation | Low | Medium | High |

| Complexity | Lowest | Medium | Highest |

| Cost | Lowest | Low | Medium |

| Management Access | Via data plane interface | Via dedicated interface | Via separate VPC |

| Failure Domain Isolation | No | Partial | Complete |

| VPC Peering Required | No | No | Yes |

| Compliance Suitability | Basic | Good | Excellent |

| Best For | Dev/test, simple deployments | Production, security-conscious | Enterprise, compliance-driven |

Decision Tree

Use this decision tree to select the appropriate management isolation level:

1. Is this a production deployment?
   ├─ No → Combined Data + Management (simplest)
   └─ Yes → Continue to question 2

2. Do you have compliance requirements for management plane isolation?
   ├─ No → Dedicated Management ENI (good balance)
   └─ Yes → Continue to question 3

3. Do you have existing management VPC infrastructure?
   ├─ Yes → Dedicated Management VPC (leverage existing)
   └─ No → Evaluate cost/benefit:
       ├─ High security requirements → Dedicated Management VPC
       └─ Moderate requirements → Dedicated Management ENI

Deployment Patterns

Pattern 1: Dedicated ENI + EIP Mode

firewall_policy_mode = "2-arm"

access_internet_mode = "eip"

enable_dedicated_management_eni = true

- Port2 receives EIP for public management access

- Suitable for environments without management VPC

- Simplified deployment with direct internet management access

Pattern 2: Dedicated ENI + Management VPC

firewall_policy_mode = "2-arm"

access_internet_mode = "nat_gw"

enable_dedicated_management_vpc = true

dedicated_management_vpc_tag = "my-mgmt-vpc"

- Port2 connects to separate management VPC

- Management VPC has dedicated internet gateway or VPN connectivity

- Preferred for production environments with strict network segmentation

Pattern 3: Combined Management (Default)

firewall_policy_mode = "2-arm"

access_internet_mode = "eip"

enable_dedicated_management_eni = false

- Port2 remains in data plane

- Management access shares public interface with egress traffic

- Simplest configuration but lacks management plane isolation

Best Practices

- Enable dedicated management ENI for production: Provides clear separation of concerns

- Use dedicated management VPC for enterprise deployments: Optimal security posture

- Document connectivity requirements: Ensure operations teams understand access paths

- Test connectivity before production: Verify alternative access methods work

- Plan for failure scenarios: Ensure backup access methods (SSM, VPN) are available

- Use existing_vpc_resources template for management VPC: Separates lifecycle management

- Document tag conventions: Ensure consistent tagging across environments

- Monitor management interface health: Set up CloudWatch alarms for management connectivity

Troubleshooting

Issue: Cannot access FortiGate management interface

Check:

- Security groups allow inbound traffic on management port (443, 22)

- Route tables provide path from your location to management interface

- If using dedicated management VPC, verify VPC peering or TGW is operational

- If using NAT Gateway mode, verify you have alternative access method (VPN, Direct Connect)
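Before digging into security groups and route tables, it can help to confirm from your workstation or a bastion host whether the management ports answer at all. The following is a minimal sketch using only the Python standard library; the function names are hypothetical, and the host you pass in is whatever address you normally use to reach the FortiGate.

```python
import socket
from typing import Dict, Iterable


def tcp_port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


def check_management_ports(host: str,
                           ports: Iterable[int] = (443, 22)) -> Dict[int, bool]:
    """Probe the management ports named in the checklist above."""
    return {port: tcp_port_open(host, port) for port in ports}

# Example call (replace the placeholder with your FortiGate management address):
# check_management_ports("<fortigate-management-ip>")
```

A timeout usually points at security groups, network ACLs, or routing, while an immediate refusal suggests the path is open but the service is not listening on that port.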

Issue: Management interface has no public IP

Cause: Using access_internet_mode = "nat_gw" with dedicated management ENI

Solutions:

- Switch to access_internet_mode = "eip" to receive public IP on port2

- Set enable_dedicated_management_vpc = true with separate internet connectivity

- Use AWS Systems Manager Session Manager for private access

- Configure VPN or Direct Connect for private network access

Issue: HA sync not working with dedicated management VPC

Check:

- VPC peering or TGW attachment is configured between management and inspection VPCs

- Security groups allow TCP 443 between FortiGate instances

- Route tables in both VPCs have routes to each other’s subnets

- Network ACLs permit required traffic

Next Steps

After configuring management isolation, proceed to Licensing Options to choose between BYOL, FortiFlex, or PAYG.

Licensing Options

Overview

The FortiGate autoscale solution supports three distinct licensing models, each optimized for different use cases, cost structures, and operational requirements. You can use a single licensing model or combine them in hybrid configurations for optimal cost efficiency.

Licensing Model Comparison

| Factor | BYOL | FortiFlex | PAYG |

|---|---|---|---|

| Total Cost (12 months) | Lowest | Medium | Highest |

| Upfront Investment | High | Medium | None |

| License Management | Manual (files) | API-driven | None |

| Flexibility | Low | High | Highest |

| Capacity Constraints | Yes (license pool) | Soft (point balance) | None |

| Best For | Long-term, predictable | Variable, flexible | Short-term, simple |

| Setup Complexity | Medium | High | Lowest |

Option 1: BYOL (Bring Your Own License)

Overview

BYOL uses traditional FortiGate-VM license files that you purchase from Fortinet or resellers. The template automates license distribution through S3 bucket storage and Lambda-based assignment.

Configuration

asg_license_directory = "asg_license"

asg_byol_asg_min_size = 2

asg_byol_asg_max_size = 4

Directory Structure Requirements

Place BYOL license files in the directory specified by asg_license_directory:

terraform/autoscale_template/
├── terraform.tfvars
├── asg_license/
│   ├── FGVM01-001.lic
│   ├── FGVM01-002.lic
│   ├── FGVM01-003.lic
│   └── FGVM01-004.lic

Automated License Assignment

- Terraform uploads .lic files to S3 during terraform apply

- Lambda retrieves available licenses when instances launch

- DynamoDB tracks assignments to prevent duplicates

- Lambda injects license via user-data script

- Licenses return to pool when instances terminate
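The assign-and-release cycle described above can be modeled in a few lines. This in-memory class is a conceptual sketch only: the real solution keeps this state in DynamoDB and the Lambda function's actual logic is not shown in this document, so the class and method names here are hypothetical.

```python
from typing import Dict, Iterable, Optional


class LicensePool:
    """Tracks which license file is assigned to which instance."""

    def __init__(self, license_files: Iterable[str]) -> None:
        # Maps license file name -> instance ID (None means available).
        self._assignments: Dict[str, Optional[str]] = {
            name: None for name in sorted(license_files)
        }

    def assign(self, instance_id: str) -> Optional[str]:
        """Give the instance a license; return None if the pool is exhausted."""
        # Idempotent: an instance that already holds a license keeps it,
        # which prevents duplicate assignments on retries.
        for name, owner in self._assignments.items():
            if owner == instance_id:
                return name
        for name, owner in self._assignments.items():
            if owner is None:
                self._assignments[name] = instance_id
                return name
        return None

    def release(self, instance_id: str) -> None:
        """Return the instance's license to the pool on termination."""
        for name, owner in self._assignments.items():
            if owner == instance_id:
                self._assignments[name] = None

    def available(self) -> int:
        return sum(1 for owner in self._assignments.values() if owner is None)
```

For example, with two license files a third instance receives `None` from `assign` until an earlier instance is released, which is the pool exhaustion case discussed below.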

Critical Capacity Planning

Warning

License Pool Exhaustion

Ensure your license directory contains at least as many license files as asg_byol_asg_max_size.

What happens if licenses are exhausted:

- New BYOL instances launch but remain unlicensed

- Unlicensed instances operate at 1 Mbps throughput

- FortiGuard services will not activate

- If PAYG ASG is configured, scaling continues using on-demand instances

Recommended: Provision 20% more licenses than max_size
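A pre-flight check along these lines can catch an undersized license pool before terraform apply. It is a sketch under stated assumptions: the function names are hypothetical, the 20% buffer is rounded up to a whole license, and only files ending in .lic directly inside the directory are counted.

```python
from pathlib import Path


def recommended_license_count(max_size: int) -> int:
    """Return max_size plus a 20% buffer, rounded up (integer arithmetic)."""
    return (max_size * 6 + 4) // 5


def count_license_files(directory: str) -> int:
    """Count *.lic files directly inside the license directory."""
    return sum(1 for p in Path(directory).glob("*.lic") if p.is_file())


def check_license_pool(directory: str, max_size: int) -> str:
    """Compare the number of license files with the BYOL ASG maximum size."""
    found = count_license_files(directory)
    recommended = recommended_license_count(max_size)
    if found < max_size:
        return f"ERROR: {found} licenses for max_size {max_size}"
    if found < recommended:
        return f"WARNING: {found} licenses, {recommended} recommended"
    return f"OK: {found} licenses"
```

For the example configuration, check_license_pool("asg_license", 4) would report a warning with four license files, because the buffer calls for five.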

Characteristics

- ✅ Lowest total cost: Best value for long-term (12+ months)

- ✅ Predictable costs: Fixed licensing regardless of usage

- ⚠️ License management: Requires managing license files

- ⚠️ Upfront investment: Must purchase licenses in advance

When to Use

- Long-term production (12+ months)

- Predictable, steady-state workloads

- Existing FortiGate BYOL licenses

- Cost-conscious deployments

Option 2: FortiFlex (Usage-Based Licensing)

Overview

FortiFlex provides consumption-based, API-driven licensing. Points are consumed daily based on configuration, offering flexibility and cost optimization compared to PAYG.

Prerequisites

- Register FortiFlex Program via FortiCare

- Purchase Point Packs

- Create Configurations in FortiFlex portal

- Generate API Credentials via IAM

For detailed setup, see Licensing Section.

Configuration

fortiflex_username = "xxxxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxxxx"

fortiflex_password = "xxxxxxxxxxxxxxxxxxxxx"

fortiflex_sn_list = ["FGVMELTMxxxxxxxx"]

fortiflex_configid_list = ["My_4CPU_Config"]

Warning

FortiFlex Serial Number List - Optional

- If defined: Use entitlements from specific programs only

- If omitted: Use any available entitlements with matching configurations

Important: Entitlements must be created manually in FortiFlex portal before deployment.

Obtaining Required Values

1. API Username and Password:

- Navigate to Services > IAM in FortiCare

- Create permission profile with FortiFlex Read/Write access

- Create API user and download credentials

- Username is UUID in credentials file

2. Serial Number List:

- Navigate to Services > Assets & Accounts > FortiFlex

- View your FortiFlex programs

- Note serial numbers from program details

3. Configuration ID List:

- In FortiFlex portal, go to Configurations

- Configuration ID is the Name field you assigned

Match CPU counts:

fgt_instance_type = "c6i.xlarge" # 4 vCPUs

fortiflex_configid_list = ["My_4CPU_Config"] # Must match

Warning

Security Best Practice

Never commit FortiFlex credentials to version control. Use:

- Terraform Cloud sensitive variables

- AWS Secrets Manager

- Environment variables: TF_VAR_fortiflex_username

- HashiCorp Vault
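Terraform reads environment variables named TF_VAR_<name> as values for the matching input variables, so the credentials can stay out of terraform.tfvars. The helper below is a minimal sketch of a pre-run check; the function name is hypothetical, and TF_VAR_fortiflex_password is assumed by analogy with the fortiflex_password variable shown earlier.

```python
import os
from typing import List, Mapping

# Variable names assumed from the fortiflex_username / fortiflex_password inputs.
REQUIRED_VARS = ("TF_VAR_fortiflex_username", "TF_VAR_fortiflex_password")


def missing_credentials(env: Mapping[str, str] = os.environ) -> List[str]:
    """Return the required TF_VAR_* names that are unset or empty in env."""
    return [name for name in REQUIRED_VARS if not env.get(name)]
```

A wrapper script could call missing_credentials() and refuse to run terraform apply when the returned list is non-empty.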

Lambda Integration Behavior

At instance launch:

- Lambda authenticates to FortiFlex API

- Creates new entitlement under specified configuration

- Receives and injects license token

- Instance activates, point consumption begins

At instance termination:

- Lambda calls API to STOP entitlement

- Point consumption halts immediately

- Entitlement preserved for reactivation
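The launch and termination behavior above amounts to a two-state lifecycle in which points accrue only while an entitlement is active. The model below is purely illustrative: it does not call the FortiFlex API, the Entitlement class is hypothetical, and the points_per_day rate is an invented placeholder rather than a real FortiFlex rate.

```python
from dataclasses import dataclass


@dataclass
class Entitlement:
    """Hypothetical model of one FortiFlex entitlement (not the FortiFlex API)."""

    config_id: str
    points_per_day: float  # assumed rate; real rates depend on the configuration
    active: bool = False
    points_consumed: float = 0.0

    def activate(self) -> None:
        """Instance launch: consumption begins."""
        self.active = True

    def stop(self) -> None:
        """Instance termination: the entitlement is kept, consumption halts."""
        self.active = False

    def tick_day(self) -> None:
        """Advance one day; points accrue only while the entitlement is active."""
        if self.active:
            self.points_consumed += self.points_per_day


ent = Entitlement("My_4CPU_Config", points_per_day=10.0)
ent.activate()
for _ in range(3):
    ent.tick_day()  # three active days
ent.stop()
for _ in range(2):
    ent.tick_day()  # two stopped days consume nothing
ent.activate()      # the preserved entitlement is reactivated
ent.tick_day()
```

Under the placeholder rate, the entitlement consumes points for four active days and none for the two days it was stopped.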

Troubleshooting

Problem: Instances don’t activate license

- Check Lambda CloudWatch logs for API errors

- Verify FortiFlex portal for failed entitlements

- Confirm network connectivity to FortiFlex API

Problem: “Insufficient points” error

- Check point balance in FortiFlex portal

- Purchase additional point packs

- Verify configurations use expected CPU counts

Characteristics

- ✅ Flexible consumption: Pay only for what you use

- ✅ No license file management: API-driven automation

- ✅ Lower cost than PAYG: Typically 20-40% less

- ⚠️ Point-based: Requires monitoring consumption

- ⚠️ API credentials: Additional security considerations

When to Use

- Variable workloads with unpredictable scaling

- Development and testing

- Short to medium-term (3-12 months)

- Burst capacity in hybrid architectures

Option 3: PAYG (Pay-As-You-Go)

Overview

PAYG uses AWS Marketplace on-demand instances, with licensing included in the hourly EC2 charge.

Configuration

asg_ondemand_asg_min_size = 0

asg_ondemand_asg_max_size = 4

asg_ondemand_asg_desired_size = 0

How It Works

- Accept FortiGate-VM AWS Marketplace terms

- Lambda launches instances using Marketplace AMI

- FortiGate activates automatically via AWS

- Hourly licensing cost added to EC2 charge

Characteristics

- ✅ Simplest option: Zero license management

- ✅ No upfront commitment: Pay per running hour

- ✅ Instant availability: No license pool constraints

- ⚠️ Highest hourly cost: Premium pricing for convenience

When to Use

- Proof-of-concept and evaluation

- Very short-term (< 3 months)

- Burst capacity in hybrid architectures

- Zero license administration requirement

Cost Comparison Example

Scenario: 2 FortiGate-VM instances (c6i.xlarge, 4 vCPU, UTP) running 24/7

| Duration | BYOL | FortiFlex | PAYG | Winner |

|---|---|---|---|---|

| 1 month | $2,730 | $1,030 | $1,460 | FortiFlex |

| 3 months | $4,190 | $3,090 | $4,380 | FortiFlex |

| 12 months | $10,760 | $12,360 | $17,520 | BYOL |

| 24 months | $19,520 | $24,720 | $35,040 | BYOL |

Note: Illustrative costs. Actual pricing varies by term and bundle.
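The figures in the table are consistent with simple linear models: roughly $1,030 per month for FortiFlex, $1,460 per month for PAYG, and $2,000 upfront plus $730 per month for BYOL. Those rates are inferred from the illustrative numbers above and are not published pricing. Under that assumption, the break-even points can be sketched as follows.

```python
# Linear cost models inferred from the illustrative table (assumption, not real pricing).
BYOL_UPFRONT = 2000
BYOL_MONTHLY = 730
FORTIFLEX_MONTHLY = 1030
PAYG_MONTHLY = 1460


def byol_cost(months: int) -> int:
    return BYOL_UPFRONT + BYOL_MONTHLY * months


def fortiflex_cost(months: int) -> int:
    return FORTIFLEX_MONTHLY * months


def payg_cost(months: int) -> int:
    return PAYG_MONTHLY * months


def cheapest(months: int) -> str:
    """Name of the lowest-cost model for a deployment lasting the given months."""
    costs = {
        "BYOL": byol_cost(months),
        "FortiFlex": fortiflex_cost(months),
        "PAYG": payg_cost(months),
    }
    return min(costs, key=costs.get)


def byol_break_even_months(monthly_rate: int) -> int:
    """First whole month at which BYOL is strictly cheaper than a flat monthly rate."""
    return BYOL_UPFRONT // (monthly_rate - BYOL_MONTHLY) + 1
```

With these inferred rates, BYOL overtakes PAYG in month 3 and FortiFlex in month 7, which matches the winners listed in the table.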

Hybrid Licensing Strategies

Strategy 1: BYOL Baseline + PAYG Burst (Recommended)

# BYOL for baseline

asg_license_directory = "asg_license"

asg_byol_asg_min_size = 2

asg_byol_asg_max_size = 4

# PAYG for burst

asg_ondemand_asg_max_size = 4

Best for: Production with occasional spikes

Strategy 2: FortiFlex Baseline + PAYG Burst

# FortiFlex for flexible baseline

fortiflex_configid_list = ["My_4CPU_Config"]

asg_byol_asg_max_size = 4

# PAYG for burst

asg_ondemand_asg_max_size = 4

Best for: Variable workloads with unpredictable spikes

Strategy 3: All BYOL (Cost-Optimized)

asg_license_directory = "asg_license"

asg_byol_asg_min_size = 2

asg_byol_asg_max_size = 6

asg_ondemand_asg_max_size = 0

Best for: Stable, predictable workloads

Strategy 4: All PAYG (Simplest)

asg_byol_asg_max_size = 0

asg_ondemand_asg_min_size = 2

asg_ondemand_asg_max_size = 8

Best for: POC, short-term, extreme variability

Decision Tree

1. Expected deployment duration?

├─ < 3 months → PAYG

├─ 3-12 months → FortiFlex or evaluate costs

└─ > 12 months → BYOL + PAYG burst

2. Workload predictable?

├─ Yes, stable → BYOL

└─ No, variable → FortiFlex or Hybrid

3. Want to manage license files?

├─ No → FortiFlex or PAYG

└─ Yes, for cost savings → BYOL

4. Tolerance for complexity?

├─ Low → PAYG

├─ Medium → FortiFlex

└─ High (cost focus) → BYOL

Best Practices

- Calculate TCO: Use comparison matrix for your scenario

- Start simple: Begin with PAYG for POC, optimize for production

- Monitor costs: Track consumption via CloudWatch and FortiFlex reports

- Provision buffer: 20% more licenses/entitlements than max_size

- Secure credentials: Never commit FortiFlex credentials to git

- Test assignment: Verify Lambda logs show successful injection

- Plan exhaustion: Configure PAYG burst as safety net

- Document strategy: Ensure ops team understands hybrid configs

Next Steps

After configuring licensing, proceed to FortiManager Integration for centralized management.

FortiManager Integration

Overview

The template supports optional integration with FortiManager for centralized management, policy orchestration, and configuration synchronization across the autoscale group.

Configuration

Enable FortiManager integration by setting the following variables in terraform.tfvars:

enable_fortimanager_integration = true

fortimanager_ip = "10.0.100.50"

fortimanager_sn = "FMGVM0000000001"

fortimanager_vrf_select = 1

Variable Definitions

| Variable | Type | Required | Description |

|---|---|---|---|

| enable_fortimanager_integration | boolean | Yes | Master switch to enable/disable FortiManager integration |

| fortimanager_ip | string | Yes | FortiManager IP address or FQDN accessible from FortiGate management interfaces |

| fortimanager_sn | string | Yes | FortiManager serial number for device registration |

| fortimanager_vrf_select | number | No | VRF ID for routing to FortiManager (default: 0 for global VRF) |

How FortiManager Integration Works

When enable_fortimanager_integration = true:

- Lambda generates FortiOS config: The Lambda function creates the config system central-management stanza

- Primary instance registration: Only the primary FortiGate instance registers with FortiManager

- VDOM exception configured: Lambda adds config system vdom-exception to prevent the central-management config from syncing to secondaries

- Configuration synchronization: Primary instance syncs configuration to secondary instances via FortiGate-native HA sync

- Policy deployment: Policies deployed from FortiManager propagate through primary → secondary sync

Generated FortiOS Configuration

Lambda automatically generates the following configuration on the primary instance only:

config system vdom-exception

edit 0

set object system.central-management

next

end

config system central-management

set type fortimanager

set fmg 10.0.100.50

set serial-number FMGVM0000000001

set vrf-select 1

end

Secondary instances do not receive central-management configuration, preventing:

- Orphaned device entries on FortiManager during scale-in events

- Confusion about which instance is authoritative for policy

- Unnecessary FortiManager license consumption
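The generated configuration shown above can be reproduced from the three FortiManager variables with simple string templating. This is an illustrative sketch only: render_central_management is a hypothetical helper, not the Lambda function's actual code, and the indentation is cosmetic.

```python
def render_central_management(fmg_ip: str, fmg_sn: str, vrf_select: int = 0) -> str:
    """Render the FortiOS stanzas from the fortimanager_* variable values."""
    lines = [
        "config system vdom-exception",
        "    edit 0",
        "        set object system.central-management",
        "    next",
        "end",
        "config system central-management",
        "    set type fortimanager",
        f"    set fmg {fmg_ip}",
        f"    set serial-number {fmg_sn}",
        f"    set vrf-select {vrf_select}",
        "end",
    ]
    return "\n".join(lines)


config = render_central_management("10.0.100.50", "FMGVM0000000001", vrf_select=1)
```

Comparing the rendered text with the running configuration on the primary instance is one way to confirm that the values in terraform.tfvars were applied as expected.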

Network Connectivity Requirements

FortiGate → FortiManager:

- TCP 541: FortiGate to FortiManager management communication (FGFM protocol)

- TCP 514 (optional): Syslog if logging to FortiManager

- HTTPS 443: FortiManager GUI access for administrators

Ensure:

- Security groups allow traffic from FortiGate management interfaces to FortiManager

- Route tables provide path to FortiManager IP

- Network ACLs permit required traffic

- VRF routing configured if using non-default VRF
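A quick TCP reachability test can help narrow down whether security groups, routes, or network ACLs are blocking the path. The sketch below uses only the Python standard library; tcp_port_open is a hypothetical helper, and the result is only meaningful when it runs on a host in the same subnet and security group as the FortiGate management interfaces.

```python
import socket


def tcp_port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable networks.
        return False
```

For the example values used in this section, tcp_port_open("10.0.100.50", 541) would test the FGFM port. A successful connection shows only that the port is reachable, not that device registration will succeed.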

VRF Selection

The fortimanager_vrf_select parameter specifies which VRF to use for FortiManager connectivity.

Common scenarios:

- 0 (default): Use global VRF; FortiManager accessible via default routing table

- 1 or higher: Use specific management VRF; FortiManager accessible via separate routing domain

When to use non-default VRF:

- FortiManager in separate management VPC requiring VPC peering or TGW